Published: Apr 01, 2026

Sovereign AI in Australia: From compliance obligation to competitive advantage

Sovereign AI in Australia is no longer a conceptual discussion. It is defined by enforceable policy obligations across PSPF, ISM, and SOCI that govern how AI systems are controlled, accessed, and audited.

Australia has passed the point where AI is experimental. AI is now regulated operating infrastructure. The question has shifted from how to adopt AI to how to control it within operating and regulatory boundaries.

With the National AI Plan, GovAI, and an accelerating policy framework spanning the PSPF, the Information Security Manual (ISM), and the Security of Critical Infrastructure Act (SOCI), the governance baseline is moving faster than most organisations have been able to track. Many believe they are making progress because their data is stored within Australia. Data residency addresses only one dimension of the problem.

Australian Government policy requires explicit controls over AI access, provider risk, and the handling of sensitive data, aligned to the PSPF and ISM. Insider threat and supply chain integrity are now primary risk considerations in procurement and audit.

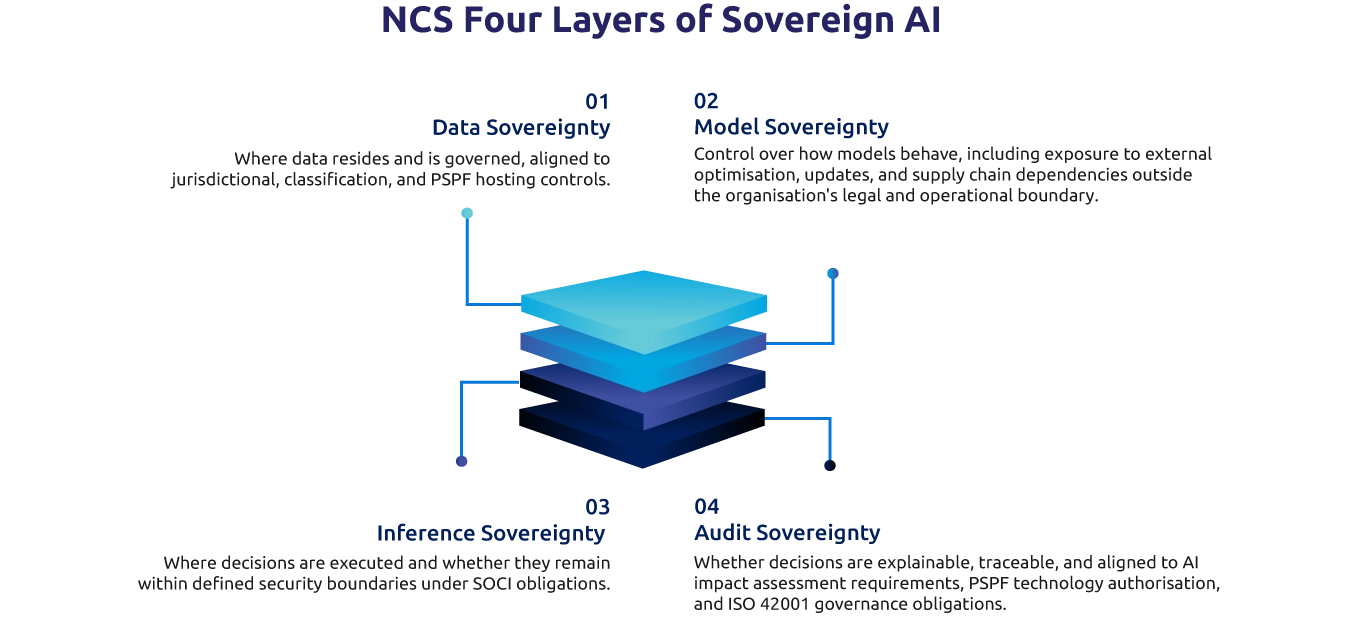

In this context, sovereignty is not defined by where data sits alone, but by who retains control across data, model behaviour, inference, and auditability.

Sovereign AI operates across four control layers. Most organisations have meaningfully addressed only one.

Most organisations do not have full control over how AI systems behave within their own environments, and the gap often stays hidden until it is tested under regulatory or procurement scrutiny. Data residency addresses only one layer; the remaining three determine whether genuine operational control exists.

These are not technical questions. They are decisions about control, accountability, and risk. As Australian Government policy obligations continue to strengthen, organisations that have not addressed all four layers face increasing exposure in procurement, compliance, and operational continuity.

This shift is not only regulatory. It is fundamentally commercial.

Australia is unlocking high-value datasets across health, agriculture, and public administration. Access to these opportunities will depend on demonstrated governance maturity, including the ability to satisfy PSPF, ISM, and SOCI obligations in an auditable and repeatable way. This advantage will not be evenly distributed. It will favour organisations that can demonstrate control, auditability, and compliance at scale.

Organisations with defensible sovereign AI architecture will move faster, access more opportunities, and scale with fewer constraints. Those without it will face delayed access to regulated datasets, constrained procurement pathways, and forced redesign under audit conditions.

Getting this right requires a different approach.

AI strategy, Responsible AI, and sovereign architecture must be defined together, not sequentially. Without this alignment, organisations default to fragmented ownership, with technology, risk, and business functions operating on different assumptions about control. This is particularly acute in environments where PSPF, ISM, and SOCI obligations interact and where insider threat and supply chain risk require end-to-end governance rather than point solutions.

How NCS helps

NCS has worked with regulated organisations to implement AI under strict security, audit, and compliance conditions, including environments where the architecture had to satisfy classification and supply chain requirements before deployment. The challenge is not the technology. It is aligning control, governance, and value from the outset, within the policy framework that governs the environment.

In a recent Australian federal government engagement, the initial deployment assumed cloud-based inference within an Australian region. A sovereignty review identified that the specific workload could not satisfy the required security boundary, and that supply chain dependencies introduced exposure not identified during procurement. The architecture was redesigned to separate inference layers by classification level and remove third-party risk before production. The result met both operational and audit requirements, avoiding redesign under regulatory scrutiny.
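The idea of separating inference by classification level can be illustrated with a minimal Python sketch. It is not the architecture delivered in the engagement above. The endpoint names, the `route` function, and the rule that excludes endpoints with third-party dependencies for PROTECTED workloads and above are all assumptions made for illustration. Only the classification names follow the PSPF.

```python
from dataclasses import dataclass
from enum import IntEnum


class Classification(IntEnum):
    """PSPF security classifications, ordered from least to most sensitive."""
    OFFICIAL = 0
    OFFICIAL_SENSITIVE = 1
    PROTECTED = 2
    SECRET = 3
    TOP_SECRET = 4


@dataclass(frozen=True)
class InferenceEndpoint:
    name: str                                   # hypothetical label, not a real service
    max_classification: Classification          # highest level the endpoint is cleared for
    third_party_dependencies: tuple[str, ...] = ()


def route(workload: Classification,
          endpoints: list[InferenceEndpoint]) -> InferenceEndpoint:
    """Return the lowest-cleared endpoint that may still serve the workload.

    Assumed rule: workloads at PROTECTED or above may not use an endpoint
    that has any third-party dependency.
    """
    candidates = [
        e for e in endpoints
        if e.max_classification >= workload
        and not (workload >= Classification.PROTECTED and e.third_party_dependencies)
    ]
    if not candidates:
        # Fail closed: no cleared endpoint means the request is refused.
        raise PermissionError(f"No endpoint is cleared for {workload.name} workloads")
    return min(candidates, key=lambda e: e.max_classification)


# Hypothetical deployment: one regional cloud endpoint, one isolated enclave.
ENDPOINTS = [
    InferenceEndpoint("regional-cloud", Classification.OFFICIAL_SENSITIVE,
                      ("external-model-api",)),
    InferenceEndpoint("isolated-enclave", Classification.PROTECTED),
]

print(route(Classification.OFFICIAL, ENDPOINTS).name)
print(route(Classification.PROTECTED, ENDPOINTS).name)
```

The sketch fails closed: a workload with no cleared endpoint is refused, not silently downgraded. That is the kind of behaviour an auditor can verify from the routing rule itself.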

The opportunity is not just to adopt AI, but to do so in a way that withstands policy, audit, and procurement scrutiny. Organisations that can demonstrate control across all four sovereignty layers will move faster, access regulated datasets sooner, and scale without redesign under compliance pressure.

Organisations should begin by assessing their sovereign AI readiness across strategy, governance, and architecture.

NCS offers an integrated assessment across all four sovereignty layers, aligned to PSPF, ISM, SOCI, and DTA requirements.

One engagement. Full visibility across all four layers. A defensible path to production aligned to Australian Government policy requirements.

Data sources: PSPF Policy Advisory 001-2025, Department of Home Affairs, October 2025 | Australian Government Information Security Manual, ASD, June 2025 | Australia's AI Opportunities Report 2025 (OpenAI, ACS, BCA, AIIA) | Australian National AI Plan, December 2025 | DTA Policy for Responsible Use of AI in Government, December 2025 | AWS AU$20B investment, June 2025 | OpenAI and NextDC MOU, December 2025